The implied equity risk premium is a squiggly circle

But its study nevertheless builds our mental model

Professor Damodaran recently shared his regular update, "The Price of Risk: With Equity Risk Premiums, Caveat Emptor!" As usual, there is much to learn from his hard work. Still, I'm struck by the illusion of precision found in all discounted cash flow models: they generate precise values that later prove to be inaccurate[1]. How do I know this?

Like the implied ERP, sell-side price targets also discount the terminal value

Take last year's sell-side coverage by Morgan Stanley (MS) of one of my largest holdings, Coursera (COUR). In the span of one year, their price target plummeted nearly 68%, from $53.00 to $17.00. Um, thanks guys. The stock was apparently Attractive at $53, so at $17 it should be rated Supermodel, but alas it was still merely Attractive!?

In fact, the company's fundamentals did not deteriorate over this period. So what explains the abrupt crash of a price target that is supposed[2] to be impressively forward-looking? You will recall the main reason (at least the superficial one) because it was a painfully shared event: in the fourth quarter of 2021, after it became clear that inflation was not transitory, multiples began to contract, and they contracted most severely for unprofitable high growers. Over the year (CY2022), each high-tech sell-side report came with a new price target reduction. When a reason was offered, if it was offered at all, it was some variation on this (emphasis mine):

"We are lowering our terminal FCF multiple from 25x to 20x, reflecting the further pullback across software and EdTech multiples in recent months." —Coursera 1Q22 Results Commentary, Morgan Stanley, April 28th, 2022

It turns out their price target is largely a function of the terminal value's multiple. This is also true of Professor Damodaran’s implied equity risk premium (implied ERP). With respect to the influential variables, the dynamics are similar. The difference is that MS swings the present value (price target) as output given the terminal multiple and discount rate as inputs; while the professor’s method swings the discount rate as output given the present value and terminal multiple effectively as inputs. I sincerely mean to be neither critical nor profound. I only want to highlight an implicit pseudo-circularity. (At the end of this post, I’ll illustrate with a dead simple example.)
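To make "largely a function of the terminal value's multiple" concrete, here is a toy exit-multiple DCF in Python. Every input (the five free cash flows and the 10% discount rate) is hypothetical and is not Morgan Stanley's model. The only detail borrowed from the quote is the cut from 25x to 20x.

```python
def dcf_value(fcf, rate, terminal_multiple):
    """Return (PV of explicit FCFs, PV of an exit-multiple terminal value)."""
    n = len(fcf)
    explicit = sum(cf / (1 + rate) ** t for t, cf in enumerate(fcf, start=1))
    # Terminal value = multiple x final-year FCF, discounted back from year n
    terminal = terminal_multiple * fcf[-1] / (1 + rate) ** n
    return explicit, terminal


fcf = [1.0, 1.5, 2.0, 2.5, 3.0]  # HYPOTHETICAL per-share FCF, years 1-5
rate = 0.10                      # HYPOTHETICAL discount rate

values = {}
for multiple in (25, 20):
    explicit, terminal = dcf_value(fcf, rate, multiple)
    values[multiple] = explicit + terminal
    print(f"{multiple}x: target {values[multiple]:.2f}, "
          f"terminal share {terminal / values[multiple]:.0%}")

print(f"target drop: {1 - values[20] / values[25]:.0%}")
```

With these made-up inputs the explicit five-year cash flows never change. The output still falls about 17% (from roughly 53.79 to 44.48) because the terminal value carries more than 80% of the total.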

Visualizing a plausible dispersion of the implied ERP

Prompted by what I am tempted to call the implied ERP’s tautological dispersion, squiggly circularity, or self-referential ranginess (and wanting to justify to myself why I haven’t taken the ERP more seriously), I wanted to briefly and visually explore the sensitivity of the professor’s implied ERP. My R code is here or here[3]. Again, this is not an attempt at refutation; it is an arbitrary exploration of the ERP’s specific hypersensitivity to its assumptions.

His method employs a two-stage dividend discount model in which the first stage (five years) is driven by growth vectors rather than a single constant growth assumption, G1. The key assumption is the sustainable growth rate ("the expected growth rate to use after year 5"), which is set by the variable G2 in my code.

To summarize the implied ERP method (omitting most of the contributing detail that doesn’t matter for the simulation):

During the initial five years, the core inputs are an Earnings vector, {ER(1) … ER(5)}, a Cash Payout vector, {CP(1) … CP(5)}, and because cash flow CF(i) = ER(i) * CP(i), a resultant Cash Flow vector, {CF(1) … CF(5)}.

All remaining future cash flows are impounded into the terminal value (reduced to a single lump sum), so that we have a complete assumption for the future cash flow stream.

We can also observe (and therefore assume) the present value, i.e., the S&P 500 index level of 4588.96.

Much like an internal rate of return (IRR), given the future cash flows and the current price, we can infer the discount rate[4].
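Here is a minimal Python sketch of that inversion. It assumes the standard two-stage form: explicit cash flows for years 1 to 5, then a Gordon-growth terminal value CF(5)·(1+G2)/(r−G2). It uses plain bisection rather than R's optim(). The cash flow vector and the 4% riskfree rate are hypothetical placeholders; only the $219.3 in year 3 echoes the professor's CF(3). The printed ERP is therefore illustrative and should not be read as a reproduction of the professor's 4.44%.

```python
def index_value(r, cash_flows, g):
    """Two-stage value: explicit cash flows for years 1..N plus a
    Gordon-growth terminal value CF_N * (1 + g) / (r - g) at year N."""
    n = len(cash_flows)
    stage1 = sum(cf / (1 + r) ** t for t, cf in enumerate(cash_flows, start=1))
    terminal = cash_flows[-1] * (1 + g) / (r - g)
    return stage1 + terminal / (1 + r) ** n


def implied_rate(price, cash_flows, g, upper=1.0, tol=1e-10):
    """Solve index_value(r) == price for r by bisection.

    index_value falls monotonically as r rises above g, so the root is
    bracketed between just above g and `upper`."""
    lo, hi = g + 1e-9, upper
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if index_value(mid, cash_flows, g) > price:
            lo = mid  # model value too high, so the rate must rise
        else:
            hi = mid
    return (lo + hi) / 2


INDEX_LEVEL = 4588.96                              # present value from the post
CASH_FLOWS = [200.0, 210.0, 219.3, 229.0, 239.0]   # HYPOTHETICAL CF(1..5)
G2 = 0.04                                          # sustainable growth rate
RISKFREE = 0.04                                    # HYPOTHETICAL riskfree rate

r = implied_rate(INDEX_LEVEL, CASH_FLOWS, G2)
print(f"implied discount rate {r:.2%}, implied ERP {r - RISKFREE:.2%}")
```

The model value falls monotonically as r rises above G2, so the root always stays bracketed. That makes bisection a safe choice here.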

My simulation mirrors this method (and, indeed, solves for 4.44% under identical but rounded assumptions). However, mine solves for many (10,000) implied ERPs in a Monte Carlo simulation (MCS), in which I arbitrarily introduce variability into the assumptions as follows:

I’ve attached standard deviations to the earnings and cash payout vectors (in my code, ER_vector and CP_vector) and to the sustainable growth rate, G2. This converts them from constants into normal random variables; my goal is to model the uncertainty of future projections. For example, in the professor’s analysis, CF(3) = $219.3 because ER(3) * CP(3) = $273.71 * 80.12% = $219.3. In my simulation, $219.3 is no longer a fixed value but the mean of a normal random variable with a conservative σ/μ of 10%. Specifically …

To introduce variability, I arbitrarily assumed a 10.0% coefficient of variation (CV), which I think reflects a “tight,” or low, variation. In this way, the random distribution of CF(3) is N($219.3, $21.9^2). Similarly, the key sustainable growth rate, G2, enters the simulation with the distribution N(4.0%, 0.4%^2), so its 2-sigma range is roughly 3.2% to 4.8%.
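The two distributions just described can be drawn in a few lines of Python. This is a sketch, not the author's R code. It draws CF(3) directly as N(μ, (0.1μ)²) with μ = ER(3) × CP(3), rather than from separate earnings and payout draws, and it draws G2 as N(4.0%, 0.4%²), using the standard library's random.gauss. The seed is arbitrary.

```python
import random
import statistics

random.seed(1)  # arbitrary; Python's RNG differs from R's, so draws won't match the post

CV = 0.10        # coefficient of variation (sigma / mu) applied to each random input
N_SIMS = 10_000

# Mean of CF(3) from the professor's year-3 inputs: ER(3) * CP(3)
er3, cp3 = 273.71, 0.8012
cf3_mu = er3 * cp3
cf3_sigma = CV * cf3_mu

# Sustainable growth rate G2 ~ N(4.0%, 0.4%^2)
g2_mu = 0.040
g2_sigma = CV * g2_mu

cf3_draws = [random.gauss(cf3_mu, cf3_sigma) for _ in range(N_SIMS)]
g2_draws = [random.gauss(g2_mu, g2_sigma) for _ in range(N_SIMS)]

print(f"CF(3) ~ N({cf3_mu:.1f}, {cf3_sigma:.1f}^2)")
print(f"G2 two-sigma band: {g2_mu - 2 * g2_sigma:.1%} to {g2_mu + 2 * g2_sigma:.1%}")

# Sanity checks on the draws themselves
sample_cv = statistics.stdev(cf3_draws) / statistics.fmean(cf3_draws)
inside = sum(abs(g - g2_mu) <= 2 * g2_sigma for g in g2_draws) / N_SIMS
print(f"sample CV of CF(3) draws: {sample_cv:.3f}")
print(f"share of G2 draws inside the two-sigma band: {inside:.1%}")
```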

So the simulation conservatively randomizes the earnings vector, the cash payout ratio vector, and the sustainable growth rate (while keeping the present value and riskfree rate constant, because they are not predicted). Further, please note that I did not introduce any correlation between the random vectors/variables; this is further conservative, as we might reasonably expect an adverse correlation structure. Introducing positive correlation should increase the implied ERP’s dispersion. (In truth, I got a bit more dispersion in the implied ERP than I expected, precisely because I expected some “cancelling” under my “independence” assumption.)

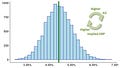

To me, the key choice here is the CV as my universal random input. As mentioned, I selected 10%. Using my seed value, set.seed(379), the mean implied ERP is approximately the same as his mean (i.e., 4.42%). My standard deviation is about 0.00610 or 0.61%. My simulation's distribution is approximately normal. Here is the histogram:

Per the quantiles, and as we might expect of an approximately normal distribution, about 95% of the ERPs lie within two standard deviations of the mean; specifically, between 3.25% and 5.63%.
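As a quick consistency check, a normal distribution with the reported mean (4.42%) and standard deviation (0.61%) has 2.5% and 97.5% quantiles that land very close to the simulated 3.25% and 5.63%. This uses only Python's statistics.NormalDist; it does not rerun the simulation.

```python
from statistics import NormalDist

mean, sd = 0.0442, 0.0061           # reported simulation mean and standard deviation
dist = NormalDist(mu=mean, sigma=sd)

# Central 95% interval under an exact normal assumption
q_lo, q_hi = dist.inv_cdf(0.025), dist.inv_cdf(0.975)
print(f"normal 2.5%-97.5% quantiles: {q_lo:.2%} to {q_hi:.2%}")            # 3.22% to 5.62%
print(f"mean +/- 2 sd:               {mean - 2 * sd:.2%} to {mean + 2 * sd:.2%}")  # 3.20% to 5.64%
```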

This illustrates my intuition: even given a fairly tight range (10% CV) on the randomness of the future vectors, the implied ERP is nevertheless quite a dispersed quantity! Numerically, the 10% CV translates into σ ≈ 60 bps in the implied ERP. Put simply:

Even if our prediction about the future turns out to be pretty good, the implied ERP is still a bit squiggly

Next, I plot the non-random relationship between the sustainable growth rate, G2, and the implied ERP, because I was too lazy to try to solve it analytically. Maybe I should have known it would be linear, but I didn’t immediately anticipate that.
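A deterministic sweep produces the kind of G2-versus-ERP line described here. This sketch assumes a two-stage model with a Gordon-growth terminal value and holds hypothetical inputs fixed: a made-up cash flow vector, a 4% riskfree rate, and the index at 4588.96. It solves for the implied ERP at G2 from 3.2% to 4.8% and reports a Pearson correlation as a rough linearity check. The numbers are illustrative only.

```python
import math


def index_value(r, cash_flows, g):
    """Explicit-period PV plus a Gordon-growth terminal value (assumed form)."""
    n = len(cash_flows)
    stage1 = sum(cf / (1 + r) ** t for t, cf in enumerate(cash_flows, start=1))
    return stage1 + cash_flows[-1] * (1 + g) / (r - g) / (1 + r) ** n


def implied_rate(price, cash_flows, g, tol=1e-10):
    """Bisection; index_value falls monotonically as r rises above g."""
    lo, hi = g + 1e-9, 1.0
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if index_value(mid, cash_flows, g) > price:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


INDEX_LEVEL = 4588.96
CASH_FLOWS = [200.0, 210.0, 219.3, 229.0, 239.0]  # HYPOTHETICAL, held fixed
RISKFREE = 0.04                                   # HYPOTHETICAL, held fixed

g_grid = [0.032 + 0.002 * i for i in range(9)]    # G2 from 3.2% to 4.8%
erps = [implied_rate(INDEX_LEVEL, CASH_FLOWS, g) - RISKFREE for g in g_grid]

for g, erp in zip(g_grid, erps):
    print(f"G2 = {g:.1%} -> implied ERP = {erp:.2%}")

# Pearson correlation between G2 and the implied ERP as a rough linearity check
mg = sum(g_grid) / len(g_grid)
me = sum(erps) / len(erps)
cov = sum((g - mg) * (e - me) for g, e in zip(g_grid, erps))
corr = cov / math.sqrt(sum((g - mg) ** 2 for g in g_grid) * sum((e - me) ** 2 for e in erps))
print(f"correlation: {corr:.4f}")
```

With these inputs, each 0.2-point step in G2 moves the implied ERP by a nearly constant amount, which is consistent with a near-linear relationship.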

Then, for fun, I reintroduced randomness to the initial five-year stage (i.e., the earnings and cash payout vectors, but neither the riskfree rate nor the present value). This is my quick attempt to visually capture the rangy ERP: if you accept a sigma of 10% for the initial growth vectors, then the ERP scatters accordingly, while still showing 80% association with the sustainable growth rate, G2.

The above is no profound piece of analysis. It’s just a visualization of how I concretely perceive this implied ERP: anywhere from ~3% to ~6% (the blue line), but mostly (>70%) a function of the sustainable growth rate assumption, G2, with a conditional dispersion of fully σ = 60 bps, a dispersion itself levered by a (modest) CV of 10% in the initial cash flow vectors. In short, it is not precise. And, I’ll go further: even if we could somehow retrieve its exact value today (e.g., 4.44%), its inherent fragility makes it very much a full-fledged stochastic process. As we go forward in time, the index price (i.e., the present value, which is an input) will fluctuate rapidly, and so, less rapidly, will the two prediction vectors and the critical, far-out sustainable growth assumption.

Why go to the trouble of computing the implied ERP?

This raises the question: What exactly is the ERP, and how should we use it? I believe the practical answers are, respectively, that the ERP is the asset class’s (equity’s) expected (ex ante) excess return, and that it therefore informs asset allocation decisions. Maybe I’ll write about that later. For now, my academic response is:

As with many financial models, often described as “wrong but useful,” the goal is not to obtain a precise estimate (e.g., a 4.44% implied ERP). Instead, the value of the model lies in our examination of its underlying assumptions, historical data (e.g., the professor’s model contains dividends and buybacks), mechanics, dynamics, and relationships. As investors, this examination is itself a journey that helps us refine our own mental model.

P.S. At the top, I said DCF-derived sell-side targets have much in common with the implied ERP. Here’s what I meant. Imagine that I sell you today a note to pay you $100 at the end of four years. If the discount rate is 8.0% per annum with annual compound frequency, the theoretical price of the note today is $100/1.08^4 = $73.50. I wrote …

The difference is that MS swings the present value (price target) as output given the terminal multiple and discount rate as inputs; while the professor’s method swings the discount rate as output given the present value and terminal multiple effectively as inputs.

… and here’s how I would compare the two against that example:

In the sell-side (MS) analogy, if you want to lower the price target (aka, present value) as an output, you can either:

Drop your estimate of the future $100 (here it’s the terminal value of sustained future cash flows in a lump sum). For example, if you revise the future $100 down to $94, the PV = 94/1.08^4 = $69.09. Or/and,

You can keep the future $100 but increase the discount rate. If you increase the discount rate from 8.0% to about 9.68%, we go down to the same PV ≈ 100/1.0968^4 ≈ $69.1.
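The arithmetic for both levers can be checked with two small helpers (the function names are illustrative). Both use annual compounding, as in the note example.

```python
def present_value(future_value, rate, years):
    """Discount a single cash flow at an annually compounded rate."""
    return future_value / (1 + rate) ** years


def rate_for(future_value, pv, years):
    """Annually compounded rate that discounts future_value back to pv."""
    return (future_value / pv) ** (1 / years) - 1


base = present_value(100, 0.08, 4)     # the note as originally priced
lever_1 = present_value(94, 0.08, 4)   # lever 1: cut the future $100 to $94
lever_2 = rate_for(100, lever_1, 4)    # lever 2: keep $100, raise the rate instead

print(f"base PV ${base:.2f}; $94 at 8% -> ${lever_1:.2f}; "
      f"same PV from $100 needs {lever_2:.2%}")
# base PV $73.50; $94 at 8% -> $69.09; same PV from $100 needs 9.68%
```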

In the implied ERP analogy, you can swing the discount rate as output while maintaining the PV of $73.50 by slightly shifting the terminal value (in this case, as a very sensitive function of the sustainable growth rate, G2). Below, the implied ERP is the discount rate minus 4%, so the baseline 8.0% rate corresponds to a 4.0% ERP:

If the terminal value drops by only $4 to $96, the implied ERP drops to 2.9% (= 6.9% - 4%) per $96/1.069^4 = $73.50, and similarly

If the terminal value increases by $4 to $104, the implied ERP jumps to 5.1% (= 9.1% - 4%) per $104/1.091^4 ≈ $73.50.
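The same single-cash-flow inversion can be run with the price held at $73.50 and only the terminal value varied. The 4% subtracted to get the ERP mirrors the text; everything else is the note example.

```python
def rate_for(future_value, present_value, years):
    """Single-cash-flow discount rate: (FV / PV)^(1/years) - 1."""
    return (future_value / present_value) ** (1 / years) - 1


RISKFREE = 0.04         # the 4% subtracted in the text
pv = 100 / 1.08 ** 4    # hold the note's price at about $73.50

results = {}
for tv in (96, 100, 104):
    r = rate_for(tv, pv, 4)
    results[tv] = r - RISKFREE
    print(f"TV ${tv}: discount rate {r:.2%}, implied ERP {r - RISKFREE:.2%}")
# TV $96: discount rate 6.90%, implied ERP 2.90%
# TV $100: discount rate 8.00%, implied ERP 4.00%
# TV $104: discount rate 9.06%, implied ERP 5.06%
```

A ±$4 (±4%) change in the terminal value moves the implied ERP from about 2.9% to about 5.1%, a spread of more than two percentage points.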

[1] I’m playing a bit loose with language. In statistics, we sample for estimates (e.g., the sample mean) and hope to infer something, or much, about an unknowable truth, e.g., the population’s “true” mean. Precision is low variability or dispersion in our estimates: over repeated trials, we get estimates that are close to each other (like darts that are tightly clustered in the popular darts diagram). Accuracy is when our average estimate (dispersed or not!) is near the true value (the population parameter that we probably cannot observe). See Wikipedia. I doubt that implied ERPs are either precise or accurate, but solving for 4.44X% might at least connote or suggest some precision.

[2] Aside from, you know, the fact that the actual share price dropped, momentum shifted (double emphasis), and the authors might be following stock prices rather than actually leading them. I know. It’s a shocking disappointment because you were so invested (pun intended) in sell-side’s published price targets. Could they really lack durable conviction? They are fundamental value analysts, after all. Some even have CFAs. Oops, I meant to write that “some are holders of the right to use the Chartered Financial Analyst® designation” (I’m in that group, so I really shouldn’t have had to look it up, yikes). Yes, on reflection (never mind these doubts), let’s assume they changed the fine assumptions in their DCF model and the output crashed as a matter of independent and coincident observation. Phew, because we don’t want price targets hanging around that are too different from the traded prices. We’d look … silly.

[3] It was GPT-4’s idea to use the optim() function after I realized the built-in IRR() was not available for the discounting operation: GPT-4 wrote the first draft of the objective_function(), which I then edited. I estimate that GPT-4 reduced the time I needed to write the entire code page from ~3 hours (my original allocation, because I am slow due to rust) to <45 minutes. This was an absolutely epic experience. Some of its R code works out of the box, some does not. And you do need to know how to code (in my opinion), or you can’t fix, or rewrite, its code to your purpose. I am well-trained in ggplot2, so I didn’t need it for the visualizations (which are super basic here). So I am drinking the AI Kool-Aid here, and with respect to R code, it’s for a specific reason: in general, I am slower (sometimes much slower) than a professional coder, and on harder functions I will get stuck. I can conceive that an expert programmer may have no need for GPT-4. But it helps me to be less slow, and it gives me the feeling that I won’t get stuck. GPT-4 increases my capability and confidence. When learning to write code, it is easy to feel defeated and to get discouraged. To write code is to write bugs and to be constantly interrupted by little setbacks, and getting help is not always easy. The tool’s ability to boost confidence seems huge to me, which makes it an accelerant for learning how to write code.

[4] It is almost an IRR, but not quite, due to the second appearance of the discount rate in the denominator of the terminal value’s discounting function: (r-g) in the professor’s equation, or (R-G2) in my code.
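For reference, here is a sketch of that pricing equation in the notation above. It is my reconstruction and assumes the usual Gordon-growth terminal value built on next year's cash flow CF(5)·(1+g); it is not copied from the professor's spreadsheet. P is the index level, r is the discount rate (the implied ERP is then r less the riskfree rate), and g is the sustainable growth rate, G2:

$$
P \;=\; \sum_{t=1}^{5} \frac{CF_t}{(1+r)^t} \;+\; \frac{CF_5\,(1+g)}{(r-g)\,(1+r)^5},
\qquad CF_t = ER_t \times CP_t
$$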